May 12, 2026

If you still think Ruby’s Array is “just a C struct with some methods on top,” you’re about 5 years out of date.

Modern MRI tells a very different story.

Today, Array sits at the intersection of:

- Ruby code (array.rb)

- VM intrinsics (Primitive.*)

- C runtime (array.c)

- JIT specialization (YJIT)

And the result is something much more interesting:

Ruby is starting to describe its own execution model.

Let’s walk through what’s actually happening under the hood.

🧩 The Illusion: “This Looks Like Ruby”

Open array.rb and you’ll see familiar methods:

    def shuffle!(random: Random)
      Primitive.rb_ary_shuffle_bang(random)
    end

Looks like Ruby. It’s not.

That Primitive.* call is not a method call; it's a VM intrinsic.

This line:

Primitive.rb_ary_shuffle_bang(random)

is effectively:

“jump directly into a VM-level operation, skipping normal Ruby dispatch”

So instead of:

Ruby → method lookup → Ruby body → C

You get:

Ruby → VM intrinsic → C

No detours.
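One rough way to see this split from the outside: on recent MRI builds, methods defined in the core Ruby files tend to report a source location starting with `<internal:`, while methods defined purely in C report none. The sketch below assumes MRI 3.x or later, and `definition_site` is a made-up helper name. Results will differ between Ruby versions.

```ruby
# Hypothetical helper: report where reflection says a core method lives.
# Assumption: MRI 3.x or later, where builtin Ruby files show up as "<internal:...>".
def definition_site(klass, name)
  location = klass.instance_method(name).source_location
  location ? location.first : "C function (no Ruby source location)"
end

SITES = %i[shuffle! sample first push].to_h do |name|
  [name, definition_site(Array, name)]
end

SITES.each { |name, site| puts "Array##{name}: #{site}" }
```

Which methods show up as internal Ruby depends entirely on the Ruby you run this on, so treat the output as a snapshot.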

⚙️ Primitive.cexpr!: Ruby as a Thin IR Layer

Now look at first:

    def first n = unspecified = true
      if Primitive.mandatory_only?
        Primitive.attr! :leaf
        Primitive.cexpr! %q{ ary_first(self) }
      else
        # path for an explicitly passed n (not shown in this excerpt)
      end
    end

This is where things get serious.

Primitive.cexpr! means:

“splice this C expression in at this point of the Ruby method”

So:

a.first

becomes:

ary_first(self)

with almost zero overhead.

But here’s the twist:

It’s still written in Ruby syntax.

This is not just binding to C.

It’s declaring execution to the VM.

⚡ Arity Specialization: Killing Generic Paths

Take sample:

    def sample(n = (ary = false), random: Random)
      if Primitive.mandatory_only?
        Primitive.ary_sample0
      else
        Primitive.ary_sample(random, n, ary)
      end
    end

MRI splits:

- sample

- sample(n)

into different execution paths

Why?

Because generic argument handling is slow.

So instead of:

one flexible method with branches

MRI builds:

multiple specialized entry points

This is a classic JIT strategy:

- fewer conditionals

- more predictable execution

- better inlining
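The `n = (ary = false)` and `n = unspecified = true` defaults are what let a single method tell "argument omitted" apart from "argument passed". A default expression only runs when the caller leaves the argument out, so the extra local is assigned in exactly that case and stays nil otherwise. Here is the same idiom in plain Ruby. `first_like` is a hypothetical stand-in, and it does not reproduce `Primitive.mandatory_only?`, which user code cannot call.

```ruby
# Hypothetical plain-Ruby analogue of the omitted-argument idiom.
def first_like(items, n = (unspecified = true))
  if unspecified
    # Caller wrote first_like(items): return a single element.
    items[0]
  else
    # Caller passed n explicitly: return an array of up to n elements.
    items.take(n)
  end
end

p first_like([10, 20, 30])      # single element
p first_like([10, 20, 30], 2)   # array of up to two elements
```

The two branches return different shapes (an element versus an array), which is why splitting them into separate entry points is attractive.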

🔥 The Big Shift: Rewriting Core Methods for JIT

Now the real transformation begins:

with_jit do

Inside this block, MRI does something radical:

- removes existing methods

- rewrites them in Ruby

- builds them from plain control flow plus VM intrinsics
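These rewritten definitions target the JIT, so before measuring anything it is worth checking whether YJIT is active in your process. A small check, assuming a Ruby where `RubyVM::YJIT.enabled?` is available; `yjit_status` is a hypothetical helper name:

```ruby
# Report whether YJIT is present and active in this process.
# RubyVM::YJIT may be undefined on builds or implementations without YJIT.
def yjit_status
  return :unavailable unless defined?(RubyVM::YJIT)

  RubyVM::YJIT.enabled? ? :enabled : :disabled
end

puts "YJIT: #{yjit_status}"
```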

Example: each

    def each
      Primitive.attr! :inline_block, :c_trace, :without_interrupts
      i = 0
      until Primitive.rb_jit_ary_at_end(i)
        yield Primitive.rb_jit_ary_at(i)
        i = Primitive.rb_jit_fixnum_inc(i)
      end
      self
    end

This is not idiomatic Ruby.

This is:

a hand-written, JIT-friendly loop
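To make the shape of that loop concrete, here is a plain-Ruby analogue. The `Primitive.rb_jit_*` calls are not available to user code, so ordinary operations stand in for them. `each_like` is a hypothetical name, and the mapping in the comments is my reading of the intrinsics' names, not a statement about their implementation.

```ruby
# Plain-Ruby analogue of the loop above. The commented operations are
# stand-ins for the Primitive.rb_jit_* intrinsics, which user code cannot call.
def each_like(ary)
  i = 0
  until i >= ary.length    # stand-in for Primitive.rb_jit_ary_at_end(i)
    yield ary[i]           # stand-in for Primitive.rb_jit_ary_at(i)
    i += 1                 # stand-in for Primitive.rb_jit_fixnum_inc(i)
  end
  ary
end

SEEN = []
source = [1, 2, 3]
each_like(source) do |x|
  SEEN << x
  source << 99 if x == 1   # grow the array while iterating
end
p SEEN
```

Because the end check runs on every iteration, an element appended by the block is still visited.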

🧠 Why This Is Faster Than C (Sometimes)

That sounds backwards, but here’s why it works.

Old approach (C implementation)

- opaque to the JIT

- no inlining across boundary

- fixed behavior

New approach (Ruby + intrinsics)

- fully visible to YJIT

- control flow is explicit

- operations are predictable

So YJIT can turn this into something very close to:

    for (i = 0; i < len; i++) {
        yield(array[i]);
    }

In other words:

Ruby code becomes compiler-friendly IR

🏎️ map / select: Manual Vector Construction

Look at map:

result = Primitive.ary_sized_alloc

This is not:

result = []

It’s:

pre-sized allocation

Then:

Primitive.rb_jit_ary_push(result, value)

This avoids:

- repeated reallocations

- capacity growth checks

- memory churn

MRI is manually building what looks like a C-style dynamic array loop.
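A plain-Ruby sketch of that loop shape follows. Ordinary Ruby has no way to request a capacity up front, so `[]` is only a placeholder for `Primitive.ary_sized_alloc`, and `push` stands in for `Primitive.rb_jit_ary_push`. `map_like` is a hypothetical name.

```ruby
# Hypothetical plain-Ruby analogue of the map loop described above.
def map_like(ary)
  result = []                    # placeholder for Primitive.ary_sized_alloc (no pre-sizing here)
  i = 0
  until i >= ary.length
    result.push(yield(ary[i]))   # stand-in for Primitive.rb_jit_ary_push
    i += 1
  end
  result
end

p map_like([1, 2, 3]) { |x| x * 2 }
```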

⛔ Interrupt Suppression: Raw Throughput Mode

The JIT-optimized each above also carries this attribute:

Primitive.attr! :without_interrupts

This disables:

- thread scheduling checks

- signal handling inside the loop

Why?

Because those checks are expensive.

MRI is explicitly choosing:

maximum throughput over responsiveness

for hot paths.

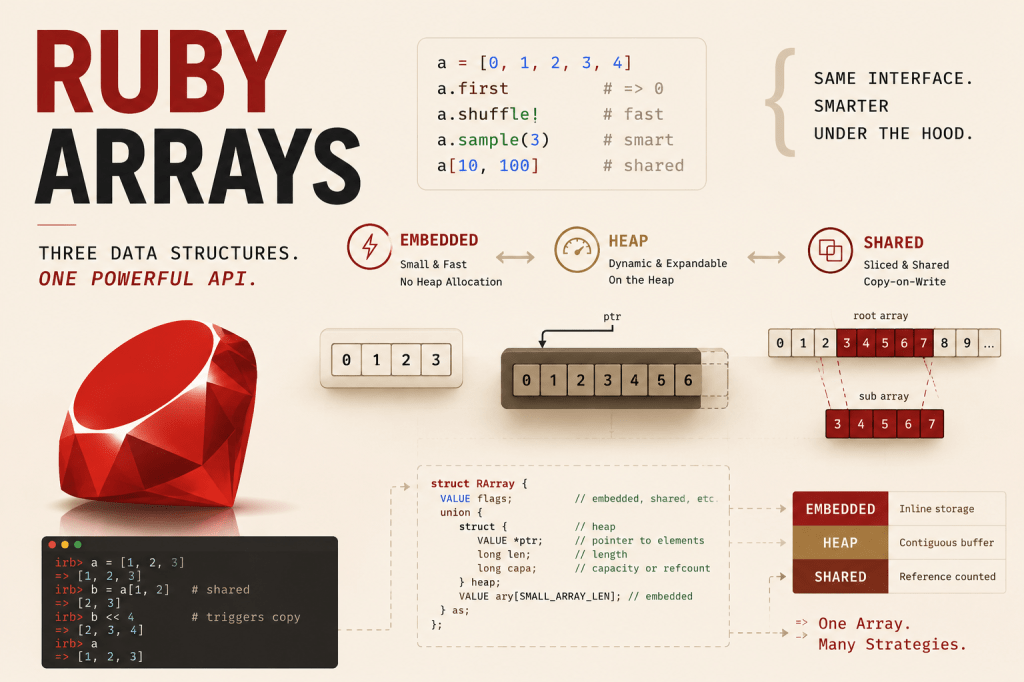

📦 Meanwhile, Underneath: Three Array Representations

All of this sits on top of array.c, where arrays are not just arrays.

They are:

| Representation | Purpose |
| --- | --- |
| Embedded | small arrays (no heap allocation) |
| Heap | standard dynamic arrays |
| Shared | copy-on-write slices |

Embedded arrays

Small arrays live inside the object itself:

[1, 2, 3]

No malloc. No pointer chasing.
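You can get a rough feel for the difference with `ObjectSpace.memsize_of` from the standard `objspace` library. It is an MRI-specific estimate, so the sketch below only compares a tiny array with a large one and makes no claim about exact byte counts.

```ruby
require "objspace"

# ObjectSpace.memsize_of is an MRI-specific estimate, so treat the numbers as
# indicative only; they vary by Ruby version and platform.
SMALL = [1, 2, 3]
LARGE = Array.new(10_000) { |i| i }

SMALL_BYTES = ObjectSpace.memsize_of(SMALL)
LARGE_BYTES = ObjectSpace.memsize_of(LARGE)

puts "3 elements:      #{SMALL_BYTES} bytes"
puts "10,000 elements: #{LARGE_BYTES} bytes"
```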

Shared arrays (copy-on-write)

slice = array[10, 100]

May not copy anything.

Instead:

- shares memory

- copies only on mutation

Classic COW optimization.
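Sharing is invisible from Ruby code, which is the point. The sketch below only demonstrates the guarantee that makes it safe: whether or not the slice shares storage internally, a write to either side never shows up in the other.

```ruby
BIG = Array.new(1_000) { |i| i }
SLICE = BIG[10, 100]        # may share BIG's buffer internally

SLICE[0] = :changed         # mutating the slice must not leak into BIG
BIG[11] = :also_changed     # and mutating BIG must not leak into the slice

p BIG[10]    # still the original element at index 10
p SLICE[1]   # still the original element taken from index 11
```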

🧠 What This Really Means

This is the important part.

MRI is no longer just:

a C interpreter for Ruby

It’s becoming:

a hybrid VM where Ruby itself helps define optimized execution

We now have:

| Layer | Role |
| --- | --- |
| Ruby (array.rb) | VM-visible logic |
| Intrinsics (Primitive.*) | IR / operations |
| C (array.c) | memory + core ops |
| YJIT | specialization engine |

🚨 One Important Clarification

This is not a tracing JIT.

YJIT uses:

basic block versioning (BBV)

Meaning:

- compiles small regions

- specializes based on observed types

- avoids long trace recording

This keeps things:

- simpler

- more predictable

- easier to maintain
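As a loose analogy only, the "specialize on observed types, lazily" idea can be modeled in a few lines of Ruby. This is not how YJIT is implemented. Real basic block versioning emits machine code per block, whereas this toy caches one lambda per observed pair of operand classes. `ToyVersionedAdd` and everything in it are hypothetical names.

```ruby
# Toy model only: caches one specialized lambda per observed pair of operand
# classes, generated the first time that combination is seen.
class ToyVersionedAdd
  attr_reader :versions

  def initialize
    @versions = {}
  end

  def call(a, b)
    key = [a.class, b.class]
    # Generate a version lazily, on first sight of this type combination.
    version = (@versions[key] ||= specialize(key))
    version.call(a, b)
  end

  private

  def specialize(key)
    case key
    when [Integer, Integer] then ->(a, b) { a + b }                 # integer path
    when [String, String]   then ->(a, b) { "#{a}#{b}" }            # string path
    else                         ->(a, b) { a.public_send(:+, b) }  # generic fallback
    end
  end
end

ADDER = ToyVersionedAdd.new
p ADDER.call(1, 2)
p ADDER.call("a", "b")
p ADDER.call(3, 4)          # reuses the Integer/Integer version
p ADDER.versions.size
```

The cache only grows when a new type combination is observed, and repeated calls with known types reuse an existing version.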

🧩 The New Mental Model

Forget:

“Array is implemented in C”

Think:

Array is a polymorphic runtime structure with JIT-specialized behavior described in Ruby

Or more bluntly:

Array is three data structures in a trench coat, driven by a VM that now understands Ruby itself

🏁 Final Takeaway

This file is not just an implementation detail.

It’s a signal.

MRI is shifting toward:

- Ruby-defined core behavior

- VM-aware intrinsics

- JIT-specialized execution paths

And in that world:

Writing Ruby the right way can be as optimizable as writing C.