Ruby has long balanced developer happiness with safety, but parallel performance has historically been constrained by the Global VM Lock (GVL). Ractors, introduced as an experimental feature in Ruby 3.0, were the first serious attempt to bring true multicore parallelism to MRI without sacrificing thread safety.

With Ruby 4, the introduction of Ractor::Port significantly improves how Ractors communicate, making actor-style architectures far more practical.

This article explains what changed, why it matters, and when you should actually consider using it.

Why Ractors Exist (and Why Threads Aren’t Enough)

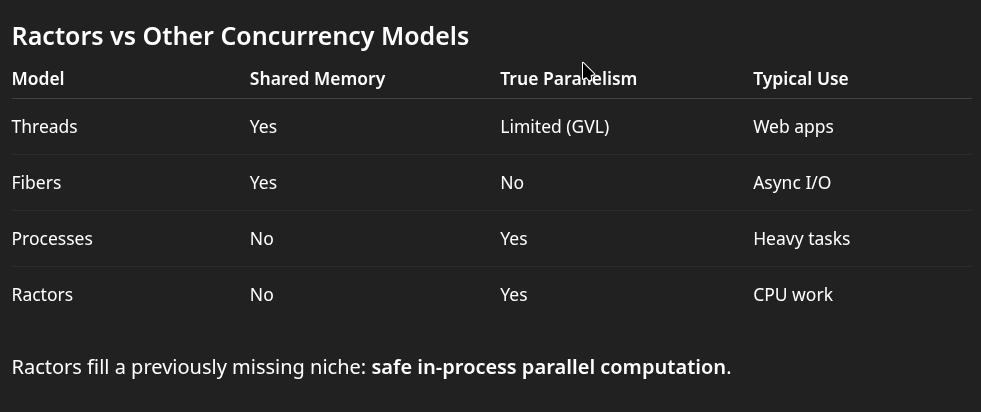

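Ruby threads share memory and therefore must coordinate access to mutable objects. MRI's GVL lets only one thread execute Ruby code at a time. That protects the interpreter's internals (it does not make application code free of race conditions), and it means threads cannot spread CPU-bound work across cores.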

Ractors solve this differently:

✔ Isolated object graphs (no shared mutable state)

✔ Message passing instead of memory sharing

✔ True parallel execution across cores

Conceptually, Ractors are closer to actors (Erlang, Akka) than to threads.
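The difference in sharing rules is easy to see in a few lines. The sketch below is illustrative only (the method names are made up for this example): a thread's block can freely mutate a variable from the enclosing scope, while a Ractor's block is rejected as soon as it refers to one.

```ruby
# A thread's block closes over outer variables, so shared state is implicit.
def thread_increment
  counter = 0
  Thread.new { counter += 1 }.join
  counter
end

# A Ractor's block must be isolated: referring to an outer local variable
# raises ArgumentError when the Ractor is created.
def ractor_increment_error
  counter = 0
  Ractor.new { counter += 1 }
  nil
rescue ArgumentError => e
  e.message
end

puts thread_increment        # the thread updated the shared variable
puts ractor_increment_error  # the reason the Ractor was rejected
```

Anything a Ractor needs has to be passed in explicitly, either as an argument to Ractor.new or as a message.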

The Problem with the Original Ractor API

Early Ractor communication relied on implicit mailboxes. Results came back through Ractor.yield and Ractor#take, both of which were removed in Ruby 4.0:

    r = Ractor.new do
      Ractor.yield "hello"
    end

    r.take # => "hello"

This works for simple cases, but becomes awkward when building real systems:

- Tight coupling between sender and receiver

- Hard to build pipelines

- Difficult fan-out / fan-in patterns

- Communication topology not explicit

In other words: fine for demos, not ideal for architectures.

Enter Ractor::Port: Explicit Channels

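Ruby 4 introduces ports, which behave like standalone communication endpoints. Any Ractor that holds a port can send to it, but only the Ractor that created the port can receive from it.

Think of them as rough analogues of: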

- Go channels

- Erlang mailboxes

- UNIX pipes

- Actor message queues

Minimal Example

    port = Ractor::Port.new

    worker = Ractor.new(port) do |p|
      p.send("Hello from another CPU core")
    end

    puts port.receive

Key improvements:

✔ Communication is explicit

✔ Producers and consumers are decoupled

✔ Multiple Ractors can send to the same port

✔ Enables complex topologies
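One way to see the decoupling is a request/reply exchange in which the caller creates a private port and ships it along with the request. This is a minimal sketch that assumes Ruby 4.0 or later; start_doubler and ask are hypothetical helper names, not part of the Ractor API.

```ruby
# Requires Ruby 4.0+ (Ractor::Port).

# Starts a service Ractor that doubles each number it is sent.
# Every request carries the port on which the caller wants the answer.
def start_doubler
  Ractor.new do
    loop do
      value, reply_to = Ractor.receive # the service's own default port
      reply_to.send(value * 2)
    end
  end
end

# Sends one request and waits for the answer on a fresh, private port.
def ask(service, value)
  reply = Ractor::Port.new
  service.send([value, reply])
  reply.receive
end

doubler = start_doubler
puts ask(doubler, 21)
```

The service never needs to know who its callers are, since each message says where the reply should go.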

Building Real Concurrent Pipelines

Ports shine when you move beyond toy examples.

Parallel Processing Pipeline

    output = Ractor::Port.new

    worker = Ractor.new(output) do |out_p|
      loop do
        # Only a port's creator can receive from it, so the worker reads
        # from its own default port (filled by worker.send below).
        value = Ractor.receive
        out_p.send(value * 2)
      end
    end

    worker.send(21)
    puts output.receive # => 42

This pattern resembles streaming systems used in:

- ETL pipelines

- data processing engines

- real-time analytics

- background computation services
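To make the streaming idea concrete, here is a hedged sketch of a two-stage pipeline, assuming Ruby 4.0 or later. Because only a port's creator can receive from it, each stage reads from its own default port and pushes to the next stage, and the final stage writes to a port owned by the main Ractor. The name run_pipeline and the two stages are invented for illustration.

```ruby
# Requires Ruby 4.0+ (Ractor::Port).
def run_pipeline(numbers)
  output = Ractor::Port.new

  # Stage 2: formats each value and delivers it to the main Ractor's port.
  formatter = Ractor.new(output) do |out|
    loop { out.send("result=#{Ractor.receive}") }
  end

  # Stage 1: doubles each value and forwards it to stage 2.
  doubler = Ractor.new(formatter) do |downstream|
    loop { downstream.send(Ractor.receive * 2) }
  end

  numbers.each { |n| doubler.send(n) }
  numbers.size.times.map { output.receive }
end

p run_pipeline([1, 2, 3])
```

With one Ractor per stage, messages travel in order, so results come out in the same order the inputs went in.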

Fan-Out: One Producer, Many Workers

Parallel job processing becomes straightforward.

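    results = Ractor::Port.new

    workers = 4.times.map do
      Ractor.new(results) do |r|
        loop do
          job = Ractor.receive # each worker reads from its own default port
          r.send(job * job)
        end
      end
    end

    # Only a port's creator can receive from it, so the producer hands out jobs.
    10.times { |i| workers[i % workers.size].send(i) }
    10.times { puts results.receive }

The producer spreads jobs across the workers, the workers can run in parallel on separate cores, and every result flows back through the single results port.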
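A port can only be received from by the Ractor that created it, so a pull-style pool needs one extra step: idle workers announce themselves, and the producer answers each announcement with a job. The sketch below assumes Ruby 4.0 or later; square_all, the ready port, and the :stop sentinel are illustrative choices rather than an established pattern from the Ruby documentation.

```ruby
# Requires Ruby 4.0+ (Ractor::Port).
def square_all(jobs, worker_count: 4)
  ready   = Ractor::Port.new # idle workers announce themselves here
  results = Ractor::Port.new # finished work arrives here

  workers = worker_count.times.map do
    Ractor.new(ready, results) do |ready_port, results_port|
      loop do
        ready_port.send(Ractor.current) # "I am idle, send me a job"
        job = Ractor.receive
        break if job == :stop
        results_port.send([job, job * job])
      end
    end
  end

  # Hand each job to whichever worker reports in next.
  jobs.each { |job| ready.receive.send(job) }
  squares = jobs.size.times.map { results.receive }.to_h

  # Every worker has exactly one announcement outstanding; answer it with :stop.
  workers.size.times { ready.receive.send(:stop) }
  squares
end

p square_all((0...10).to_a)
```

Compared with round-robin dispatch, a slow job only delays the worker that holds it, because the remaining jobs go to whoever becomes idle first.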

Fan-In: Many Producers, One Consumer

Ports also support aggregation patterns.

    port = Ractor::Port.new

    3.times do |i|
      Ractor.new(port, i) do |p, id|
        p.send("message from #{id}")
      end
    end

    3.times { puts port.receive }
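Messages from different producers arrive in whatever order the producers happen to finish, so a consumer that needs a stable order has to tag and sort them. A small sketch, assuming Ruby 4.0 or later, with gather as a hypothetical helper name:

```ruby
# Requires Ruby 4.0+ (Ractor::Port).
def gather(count)
  inbox = Ractor::Port.new

  count.times do |i|
    # Each producer tags its message with its own id.
    Ractor.new(inbox, i) { |port, id| port.send([id, "message from #{id}"]) }
  end

  # Arrival order is not deterministic, so restore it from the tags.
  count.times.map { inbox.receive }.sort_by(&:first).map(&:last)
end

puts gather(3)
```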

Safety Model: No Shared Mutable State

Ractors enforce isolation.

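An object sent between Ractors is handled in one of three ways:

- Shareable objects (immutable values such as integers and symbols, and deeply frozen objects) are passed by reference

- Unshareable objects are deep-copied by default

- Unshareable objects can instead be moved with move: true, after which the sender can no longer use them

Copied transfer:

    data = []
    port.send(data) # succeeds, but the receiver gets its own deep copy

Shared transfer:

    port.send(Ractor.make_shareable(data)) # deep-freezes data and passes it by reference

Calling data.freeze is enough only when every element is itself shareable. Ractor.make_shareable freezes the whole object graph.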

This constraint eliminates data races by design.
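The copy-versus-share behavior can be observed from a single Ractor by sending an object to a port and reading it straight back. This is a sketch assuming Ruby 4.0 or later; round_trip is a hypothetical helper, and the strings are created with unary plus so that they are mutable regardless of frozen-string-literal settings.

```ruby
# Requires Ruby 4.0+ (Ractor::Port). Ractor.shareable? and
# Ractor.make_shareable have existed since Ruby 3.0.

# Sends an object through a port owned by the current Ractor and
# returns whatever arrives on the other side.
def round_trip(object)
  port = Ractor::Port.new
  port.send(object)
  port.receive
end

mutable = [+"a", +"b"]
copy = round_trip(mutable)
puts copy == mutable       # same contents
puts copy.equal?(mutable)  # but a different object: it was deep-copied

half_frozen = [+"a"].freeze
puts Ractor.shareable?(half_frozen) # frozen array, mutable element

shared = Ractor.make_shareable([+"a", +"b"])
puts Ractor.shareable?(shared)
puts round_trip(shared).equal?(shared) # passed by reference, no copy
```

The deep copy is the part to watch in practice, since sending a large unshareable structure costs time and memory proportional to its size.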

When Ractors Actually Make Sense

Despite the excitement, Ractors are not a drop-in replacement for threads.

Excellent Use Cases

✔ CPU-bound workloads

✔ Image / video processing

✔ Cryptography

✔ Scientific computation

✔ Simulation engines

✔ Parallel data transformation

Poor Use Cases

❌ Typical Rails request handling

❌ Network I/O concurrency

❌ Code relying on shared mutable state

❌ Libraries not designed for isolation

For web apps, threads and async I/O remain more practical.

Why Ractor::Port Matters Strategically

Ports don’t just add convenience — they enable architectures that were previously impractical in MRI:

- Actor systems

- Parallel pipelines

- Work-stealing pools

- Streaming computation

- In-process multicore engines

This moves Ruby closer to languages traditionally associated with concurrency, without abandoning Ruby’s safety guarantees.

What This Means for Rails Developers

Most Rails apps are I/O-bound and won’t benefit directly.

However, Ractors become valuable when your application includes:

- Heavy background computation

- Data processing services

- CPU-intensive analytics

- Embedded engines inside a Rails app

Instead of spawning processes or external services, some workloads can now run in parallel inside one Ruby process.
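As a rough illustration of that kind of in-process workload, the sketch below splits a CPU-bound calculation (counting primes by trial division) into slices, runs each slice in its own Ractor, and sums the partial counts as they arrive. It assumes Ruby 4.0 or later. The method name, the slice count, and the choice of workload are all made up for the example, and any real speedup depends on the machine and the job size.

```ruby
# Requires Ruby 4.0+ (Ractor::Port).
def parallel_prime_count(limit, slices: 4)
  results = Ractor::Port.new
  step = (limit / slices.to_f).ceil

  # Split 1..limit into contiguous ranges, one per Ractor.
  ranges = (0...slices).map { |i| (i * step + 1)..[(i + 1) * step, limit].min }

  ranges.each do |range|
    Ractor.new(results, range) do |port, r|
      count = r.count do |n|
        n > 1 && (2..Integer.sqrt(n)).none? { |d| (n % d).zero? }
      end
      port.send(count)
    end
  end

  # Partial counts can arrive in any order; addition does not care.
  ranges.size.times.sum { results.receive }
end

puts parallel_prime_count(100)
```

Inside a Rails app, code like this would typically live in a background job rather than in the request cycle.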

Final Thoughts

Ractor::Port is not a flashy feature, but it is a meaningful step toward practical parallelism in Ruby.

It transforms Ractors from an academic curiosity into a tool capable of expressing real concurrent architectures — particularly for CPU-bound workloads.

Ruby still prioritizes simplicity over raw performance, but the trajectory is clear: safe multicore execution without abandoning developer ergonomics.

For most applications today, threads and processes remain the default choice. But for computation-heavy systems, Ractors with ports may finally offer a native Ruby solution.